But enough of the complaining. This week, I’m going to put the boot into the government’s School Performance tables. Whereas my last blog looked at the simple-but-ridiculous Ofsted Schools Data Dashboard, which has next to no data and tells you nothing useful, this week’s evidence of stupidity in the field of data has a whole lot more data. It’s a bloated, here-are-all-the-numbers-we-could-find monster which needs to be looked squarely in the eye and told how utterly meaningless it is. It's a bully and it's dangerous. So let’s put the bully down.

Have a look. Once again, pick a school, any school. Once again, I've looked at Primary Schools, because that's the age group I work with. If you work or have children in Secondary, let me know what you think of the data available. At primary level, marvel at the sheer amount on data available to you, from number of pupils to percentage of children with English not as a first language, to average gross salary of all full-time qualified teachers in a school via average point score and more. See how big this monster is?

There are, in fact, over five hundred separate items of information available to you. Not that I’ve counted them, because those behind the data dump – which is effectively what it is, in all of the meanings of the word – have produced an excel file for you to download and play with, and it has 506 fields in it. I had a quick look at the Secondary data and that contains over 1000 fields of data, a huge chunk mercifully blank. The horror...

Why on earth you need all this information is a hugely moot point. Do you think that knowing the ‘Teaching staff and Education support staff expenditure’ over the past four academic years is useful to you or anyone else? What might ‘ICT learning resources’ spend per pupil tell you? How are you going to use the knowledge that ‘low attainers’ don’t do as well as ‘high attainers’? Hadn’t you guessed that already?

Who produces this stuff? And how much do they get paid? Because whatever it is, you and I should ask for our money back because its a monstrous waste of time, effort and money.

Let's get down to business

When looking at the data available, the three things which jumped up and down demanding attention were our old friend ‘Similar Schools’, the almost total lack of historical data and the ‘Value Added Measures.’

Oh no, those ‘not at all similar schools’ are back…

Now, I ranted about ‘Similar Schools’ last week in the Ofsted Schools Data Dashboard. I argued that – based on the information available on the OSDD website – the similar schools measure was tosh of the highest water. As far as I can/could tell from the OSDD, Ofsted’s idea of a similar school is one in which the children who were assessed in Year 2 were assessed at similar levels. I’m still not sure about this – it may be those in year 3, or it could be all of the children in Key Stage 2 - the supporting documentation on the OSDD site is unclear. But, either way, what I have found out about the way the performance tables select 'similar schools' is much, much more worrying.

A statistician writes

Now, as I said, I trained as a statistician. I’ve done the equations, wrestled with significance, errors and variance, and studied the thinking behind the mathematics of crunching data. It’s a fascinating field, and it’s maths at a high level. But it isn’t entirely easy to follow. That said, the study of statistics teaches you some important fundamentals about attempts to simplify complicated data.

The first thing to be said is that statistics can’t tell you anything, as such. Using statistics can help to summarise likelihoods and probabilities, and give you some insight as to whether things are likely to be similar or not. But statistics can’t say ‘if y then x’ – that’s algebra, and that’s a lot simpler, on many levels. As a statistician you can’t simply say, for example, that a child who was assessed as reading at level 2B in Year 2 is estimated to get level 4B and then actually expect that to happen. Statistics doesn’t work like that.

The child might get level 4B, but then again, they might not. That’s life. It doesn’t all work to plan. Yes, you can look at all the children in a given year 6 who did get assessed at level 4B, and work out how many were assessed at level 2B four years earlier. And you can look at the assessments made for all the other children in Year 6, and you can crunch the data, and add in all kinds of funky maths to then provide a best fit model which says, yup, 3B is likely to get you 5B, 1B is likely to get you 3A.

Past performance is no guide to future performance

But that doesn’t mean that you can say that this will definitely happen in the future –statisticians who do use this kind of probability analysis use the concept of ‘statistical error’ to accept that, you know, life is often random and we don’t really know. When you work with probability, you have to account for error; the more assumptions you make, the more error you have to account for.

I won’t bore you with too much detail. Here's a link for those who aren’t scared of equations. But basically, the Schools Performance Tables ‘Similar Schools’ are the result of bad mathematics and a fundamental misapplication of statistical analysis. To give them their due, they explain fairly comprehensively how they select schools and put Ofsted to shame for their woefully inadequate supporting documentation. But its still nonsense.

Ignore this bit if you need to

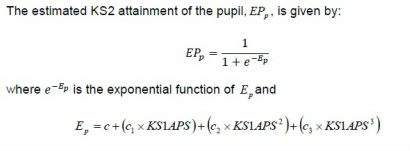

Essentially what happens is this. Make a big list of all the Average Point Scores at Key Stage 1 for all the children in a school’s Key Stage 2. Ignore the fact that you have just created mean averages of three different numbers to create an APS, and introduced huge statistical errors into your calculation. Feed each child’s APS into an equation for which you have derived constants based on one year’s Year 6 results:

And then, oh yes, simply produce a list in numerical order of the ‘school level’ estimates for all the schools in the country, and pick the 62 schools which sit immediately above and below your school. Those schools are, according to the government, similar. Which is rank nonsense of the highest order.

Random is as random does

The list may as well be chosen randomly for all that this tells you. In fact, to all intents and purposes the list is random, and a look at any school's 'similar schools' will show you. There is no regular pattern for schools in general, other than to say that the data crunching provides no pattern which is exactly what you would expect if it was random. If this method of selecting schools showed any kind of non-random distribution of school results at Key Stage 2, it might be of interest, but there is simply nothing there. Some schools get higher Average Point Scores and some don't. That's it. The 'similar schools' are not similar and to suggest so is entirely misleading.

The Department for Education then has the temerity to suggest that the ridiculous 'similar schools' measure 'is designed to give more information on the context schools are working in and help them identify local schools working in similar circumstances that they can collaborate with and learn from.' Which is complete and utter rubbish, and I pity any school which has been daft enough to waste a iota of time linking up to a random school based on this tosh.

Welcome to the wonderful world of disappearing historical data

The second thing which jumped about trying to get my attention is the fact that the Performance Tables at Primary level contain data for twice as many years as the OSDD. Yes, that’s right, they give you data for two years instead of just one! But only for headline data on ‘Percentage achieving Level 4 or above in reading, writing and maths.’ Aww! I wanted to know whether the school had been good for a few years, not just two, since, you know, maybe I’m a parent using this data to select a school for my four year old, and they aren’t going to take Key Stage 2 assessments for eight years, so I need at least that mount of data to make my decision, don’t I?

For all the data in the dump, I get just one year. Yes, for ‘Percentage making expected progress in writing’ I get just one year. Yup, for the 42 other measures of pupil performance I only have one year’s results. Uhuh. I like my favourite football team, but I don’t think that their data for last season is going to tell me much about performance in future years. I’m not even sure that their record over the last eight seasons would tell me much either…

And that’s why the Performance Tables only give you one year. Because it tells you nothing about anything other than that group of Year 6 pupils, as would the last eight year’s worth of data. Because there’s no trend from year to year.

Would you like an example? I once spent a wet weekend in Bridlington, so I chose a school there to look at. I found a fairly typical two form entry school. These are the percentages of children achieving Level 4 or above in reading, writing and maths:

2013: 77%

2012: 70%

2011: 58%

2010: 69%

2009: 77%

2008: 76%

See the pattern? No? That's because THERE ISN'T ONE. There never is, for any school, in any historical data. Because the data is based on children's results and children are complicated and individual and there aren't enough in any given school to generalise.

The lack of a trend may be why the data isn't presented over a number of years, because anyone looking at the data would realise that it is random. As any financial advert will tell you in the small print, past performance is no guarantee of future success. Long term trends in something as complicated and complex as educational outcomes are - unless you mess with the data by, you know, making the tests easier, selecting by ability or dis-applying certain children from assessment, or simply not reporting stuff - see future blogs for each of these devious methods of gaming the system - always random.

Add no value

The final thing to consider is the wonderful world of Value Added Measures. Now, these were all the rage a while ago, and were known as Contextual Value Added scores. Older readers may remember them, and why they were introduced. For the benefit of those who don’t know, or have forgotten, they came about because, when the government started publishing data showing how children performed in assessments in England and Wales in the mid 1990s, people began to notice something about schools. Some schools got really high average grades, and other got really low average grades.

The schools with high average grades were predominately in well off areas. And the schools with low average grades? In the poor areas. And why was this? Because school is the icing on the top of… Dang. I’ve said that before. Yes, context matters, and schools said so loudly and the government, to their credit, listened.

What they did, however, was a disaster. Contextual Value Added is demolished by Professor Steven Gorard in this paper. It ended up being so complicated its statistical errors were up in the 1000s of percents, and it simply told you nothing.

The latest incarnation, which is also meaningless, is Value Added and it tries to find a ‘school effect’ which shows how much the school added (or subtracted from) a pupil’s expected Key Stage 2 result compared to other schools based on their Key Stage 1 result. The answer is ‘almost nothing’ because… did I mention the icing and the cake?

Oh Lord, they’re at it again

So there you are, another set of hopeless data. Schools which aren’t similar. Data which has no depth or meaning. Which no one would use to make any judgements about a school, or its leaders or its teachers. Would they?

As long ago as 2008, Steven Gorard was telling anyone who would listen that Ofsted start with data and ‘such figures may mislead parents, governors and teachers and, even more importantly, they are being used in England by OFSTED to pre-determine the results of school inspections.'. In 2013, writing about Value Added measures he said, ‘All those schools and individual teachers negatively affected by their Value Added results may have been treated unfairly. Parents should not have been encouraged to choose, nor inspectors to judge, schools on this basis.’

The same could be said for the data contained in the Performance Tables. It tells you virtually nothing and is based on spurious analysis undertaken by people who really should know better. Education is far too complex an issue to be reduced to a number, no matter how fancy the maths. Time to put the Performance Tables data monster to sleep.

RSS Feed

RSS Feed