From numerous rewritings of the School Inspection Handbook (SIH), to a complete reorganisation of the Inspector pool and a newly integrated inspection framework, Ofsted 2015 looks very different to the 2010 equivalent. Whilst the fear of inspection remains in many schools, and unease about the organisation has been widespread, Ofsted has made great efforts to open up and face criticism head on, and for this it should be congratulated.

Whilst further evolution is clearly required, not least to head off change being imposed centrally (one only has to read Dominic Cummings’ splenetic criticisms to get some sense of the mood within Whitehall), of late Ofsted has been more Frazier than Voldemort.

In that vein, I’ve been having occasional discussions with Sean Harford and some of his statisticians following a meeting last year, and we found the time to have another gathering at Ofsted HQ on Friday 4th September. I corralled together a group of data-types including Jamie Pembroke, Yorkshire Steve and Peter Atherton, and Sean Harford and some Nameless Ones sat down with us to share developments within Ofsted and to discuss the Inspectorate’s use of numbers.

I will start by saying that, from all that Sean Harford said to us, he has once again shown that he is determined to deal with any instances of Inspectors not following the SIH, and he is open to anyone contacting him to let him know of any concerns they might have should this be the case. In short, if you have a concern, ask Sean.

Since the reorganisation of the Inspector pool late last year, all inspectors have had training on the use of numbers in schools. I’m using 'numbers' here, because Sean was very clear that the Inspectorate is encouraged to think about ‘information’, rather than ‘data’, when reviewing the current position of a school prior to and during inspection. Yes, Inspectors will look at outcomes for students and will look at historical information which is available prior to inspection, and they will also look at current information which schools can provide when Inspectors come to call. As Sean is fond of saying, this should be a signpost, not a direction.

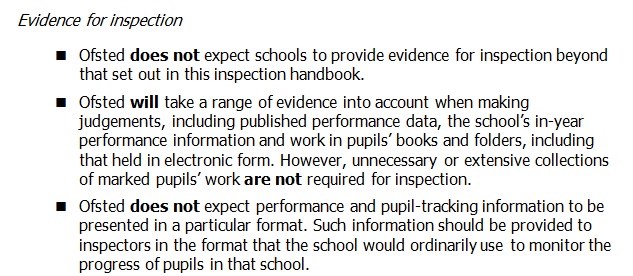

As many will know, the SIH is very clear that Inspectors will not expect performance and pupil-tracking information to be presented in a particular format.

At this point, I’m going to be Primary-specific before getting to Secondary-specific issues. Primaries have borne the brunt of the turmoil caused by the removal of levels without any statutory replacement, and the Kafka-esque nightmare of dealing with two completely different curricula: one for years 1, 3, 4 and 5, and another for years 2 and 6. For all the criticism of levels-as-assessment-criteria – and goodness knows there were lots – simply removing them was an extraordinary decision which has had and will continue to have long-term consequences for the sector. In many ways, the crisis this created – and it is an entirely manufactured crisis, whether by design or accident – has overwhelmed the more salient debates about whether learning is visible and whether we can track and compare progress using some sense of linearity at all. But I digress.

Within all the chaos caused by removal of a bad assessment system and the imposition of a new curriculum, primaries have, unsurprisingly, been extremely wary of the ‘freedoms’ these changes have imposed, and schools have ended up, for the most part, simply replicating or retaining levels in the absence of any clarity from those charged with central education policy.

Whilst an Assessment Commission has been set up, has had time to consult, and has come up with what looks like a fair summary of the mess the Primary sector finds itself in, the unpublished report is now overdue and schools are once again having to hope that Inspectors like what they see when they come to call.

As Sean Harford emphasised, Inspectors should be looking at how schools have developed their curriculum and assessment systems in response to the removal of levels and the new curriculum. It remains to be seen how Inspectors on the ground react to the multi-faceted landscape which we now have. Sean Harford is positive that Inspectors will be open to a multitude of approaches, and we will have to hope that this is the case.

At Secondary level, the removal of levels has also caused many sleepless nights, although not quite to the extent it has in Primary. Coupled with the move to Progress 8, however, it has had a huge impact on curriculum design. Progress 8 has also had a major impact on the subjects schools offer to their pupils. I’m no secondary expert, so I’ll leave this to others, but we did discuss the positives, and some teething troubles, of Progress 8 and Attainment 8.

As many readers will know, one of my long-standing issues with Inspection is with the use of test score data, and the way this impacts inspection. I’ve written, often intemperately, about the misuse of statistics as the government seeks to compare schools both with each other and with a national picture. The main criticism I started with was the use of tests of statistical significance within RAISEonline, and the effect a series of green and blue boxes has on those whose understanding of numbers may not be too secure.

The last time I met Sean, I was encouraged to hear that some of the concerns I had raised were understood by the statisticians with whom he works. It’s also been good to see some public debate about using significance tests to compare non-random samples. Whilst there is always the quiet warning that you should be careful what you wish for (which was amplified via a stadium-sized PA system for those of us who thought the use of levels for assessment was a bad idea – look what happened there…), I’m pleased that the discussion about Green/Blue is happening, and it’s clear that the debate will continue within Ofsted and the DfE.

One encouraging development is the new Inspection Dashboard, unveiled recently within RAISEonline. Whilst the detail needs scrutiny, it presents information in a much clearer format than previous summary reports. There are issues, however. The use of the terms ‘significantly above’ and ‘significantly below’ is still Not Even Wrong, and there is still work to be done to help those who struggle with the whole concept of a Null Hypothesis Significance Test to understand what these terms actually mean. But that discussion will have to happen on another day.

One serious criticism of the previous Inspection Framework was that the ‘Achievement of Pupils’ grade drove both the ‘Quality of Teaching’ and the ‘Overall Effectiveness’ grades of a school. This led in many cases to teaching being lauded or damned based on the pupil outputs of often very small cohorts of children, especially in Primary. It remains to be seen how closely the new ‘Quality of Teaching, Learning and Assessment’ grade links to the new ‘Outcomes for pupils’ grade.
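To put a rough number on the small-cohort problem, here is a minimal sketch. It is my own illustration, not anything Ofsted or RAISEonline uses: it assumes a simple binomial model in which each pupil independently reaches a threshold with the same probability, and the 80% rate and the cohort sizes are made up for the example.

```python
import math


def standard_error(p: float, n: int) -> float:
    """Standard error of an observed proportion under a simple binomial model.

    Assumes each of the n pupils independently reaches the threshold
    with probability p (an illustrative assumption, not a real model of a school).
    """
    return math.sqrt(p * (1 - p) / n)


def points_per_pupil(n: int) -> float:
    """Percentage points that a single pupil contributes to a cohort's headline figure."""
    return 100 / n


if __name__ == "__main__":
    assumed_rate = 0.8  # hypothetical underlying rate, chosen only for illustration
    for cohort_size in (15, 30, 60, 180):
        se_points = 100 * standard_error(assumed_rate, cohort_size)
        print(
            f"cohort of {cohort_size:>3}: one pupil = "
            f"{points_per_pupil(cohort_size):.1f} points, "
            f"standard error = {se_points:.1f} points"
        )
```

Under these assumptions, one pupil in a cohort of 15 is worth nearly seven percentage points of the headline figure, and the standard error is around ten points, so year-on-year swings of that size say very little about the teaching.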

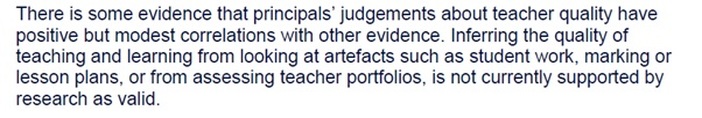

Whilst Sean was at pains to insist that both the old ‘Achievement of Pupils’ and the new ‘Outcomes for pupils’ grades aren’t directly driven by the amount of green or blue in a school’s RAISEonline report, the Inspectorate, along with many people in education, still clearly holds teachers directly accountable for the outcomes of the pupils they teach. This is a position which simply isn’t supported by current research, for reasons which I discussed in my session at ResearchED the day after the meeting. Whilst I disagree with Sean on this, his is certainly the more mainstream view as things stand, and I await the first set of Inspection reports under the new framework with interest.

All I will say is that we have removed the grading of lessons because it became clear that observations of teaching and teachers were unreliable and not grounded in research. If research says that pupils’ outcomes are based on a multitude of factors, of which teaching input is a relatively small and fairly consistent factor, and that we really aren’t sure what great teaching looks like, then judging teachers and teaching on those outcomes is also unreliable and not grounded in research. The following extracts from the Sutton Trust’s ‘What is Great Teaching?’ make this clear:

Thanks, as always, to the team at Ofsted and the DfE who made the time to meet with us, and to all those who provided input prior to the meeting, not least Becky Allen of FFT Datalab, who has been a fantastic sounding board for my thoughts on Inspection, data and information about schools.

Jamie Pembroke has written up his thoughts on the meeting here.
