As I said in October 2014, “It turns out that what we know now as the Data Dashboard was developed at Ofsted’s behest because RAISEonline is so difficult for non-statisticians to read. Governors struggle to understand what RAISE says about attainment and achievement, and the dashboard was an attempt to simplify the data.”

It is a pity that this decision was made, not least because, as David Didau rightly points out, ‘nobody ever rises to low expectations’. So yes, school data is difficult to interpret, but simplifying what is available about schools in a way which isn’t exactly helpful may actually be doing more harm than good.

What’s more, as I’ve pointed out, the Dashboard has ‘no memory and no pattern’, and it’s highly misleading for those who are given such limited information – prospective parents, for example, who might want to glean some idea of a school’s long-term academic performance.

Having made my case regarding the misuse of statistics in education, particularly those statistics based on highly unreliable, ‘fuzzy’, Not Even Wrong test scores, this year I’d like to look at the non-test score data available via the Data Dashboard, which also has to be treated with some caution. I’ll come to that in a bit.

So, what’s new for 2015? Well, as previously, it’s hard for a non-nerd to tell, since the previous versions of the dashboard have been removed once again. Even I’m not anal enough to have archived the whole of the previous website, but as far as I can tell, here’s what’s different:

- A link to the similar schools methodology has been put next to each ‘List of Similar Schools’.

- The wording in the Similar Schools methodology has changed a little, with ‘English Baccalaureate (Ebacc)’ replacing ‘Ebacc’.

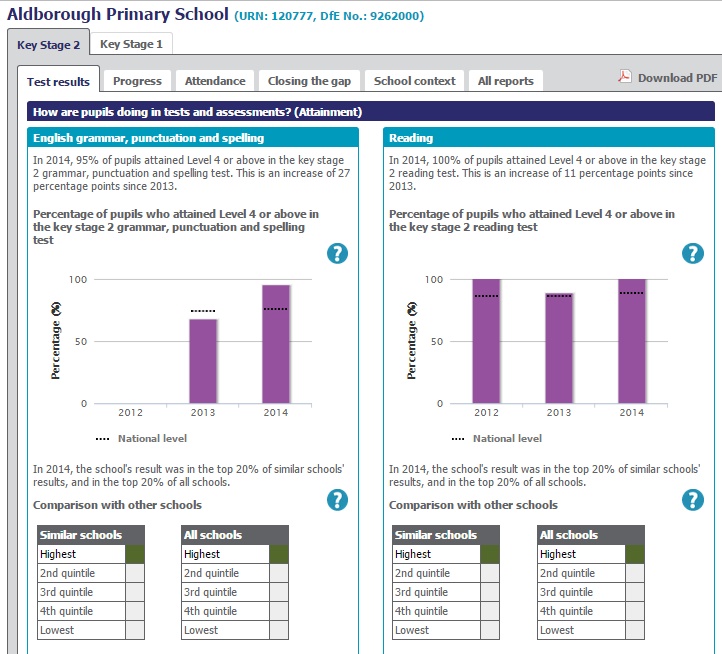

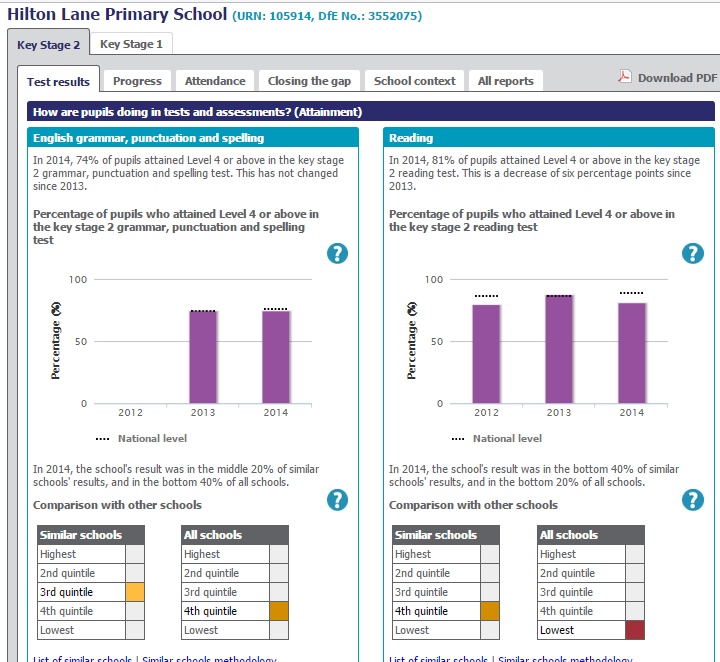

- We now have a whole two years of data for ‘English grammar, punctuation and spelling’ (after having just the one last year). This, for those who don’t follow Education Data obsessively, is because of wholesale changes to KS2 assessment, which made comparisons with past data impossible. So we’ve doubled the information available. Yay. Or not.

Other than this, nothing much has changed. The lists of similar schools (124 in primary, 54 in secondary) are still effectively random, and I’d still be amazed if anyone has used them for their intended purpose, which is to enable schools to make links with other schools which are ‘similar’ but ‘better’. The DfE does this on its Performance Tables website, although they flag schools which are both ‘similar’ and ‘better’ and within 75 miles, should a school be daft enough to waste time finding out what little they might learn from another almost-certainly-entirely-different school.

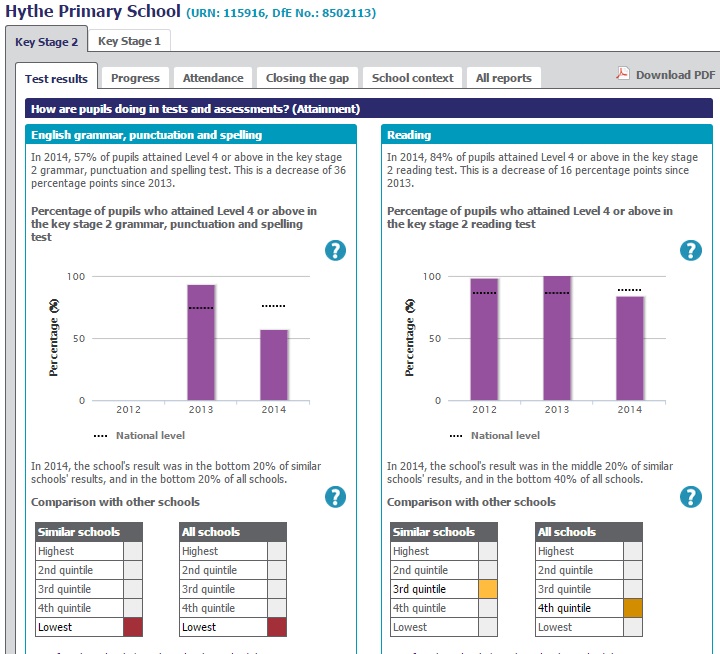

So how are the schools I highlighted in 2013 and 2012 faring? Well, here’s the 2014 data for Hythe Primary School in Southampton:

Mind you, there could be a zillion reasons for the data looking like it does. A quick look at the ‘Closing the Gap’ charts seems to suggest that what actually happened was the composition of the Year 6 class changed dramatically in 2014 compared to 2013. I bet they had some long and very frustrating discussions with whoever inspected their school.

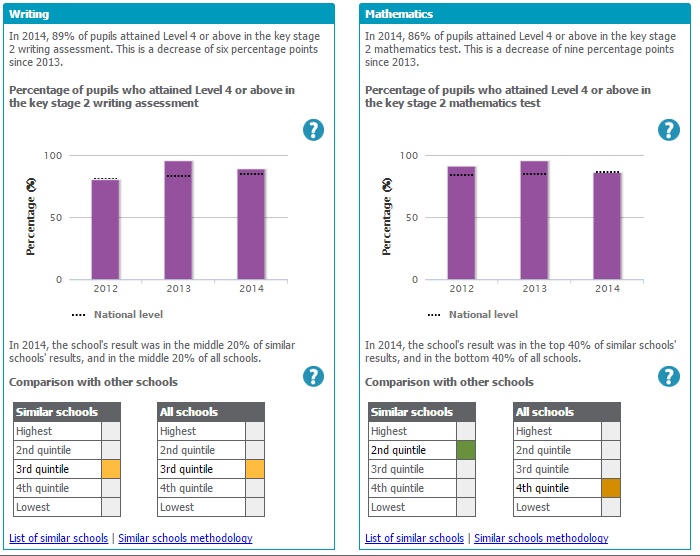

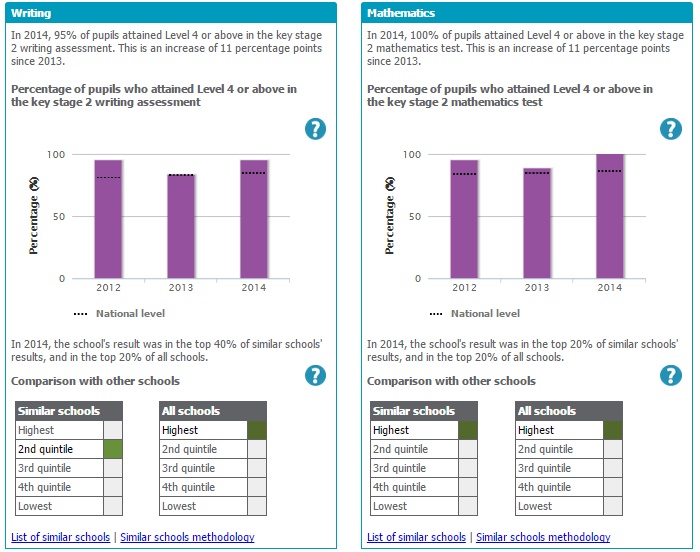

Here’s the data for Aldborough Primary School in 2014:

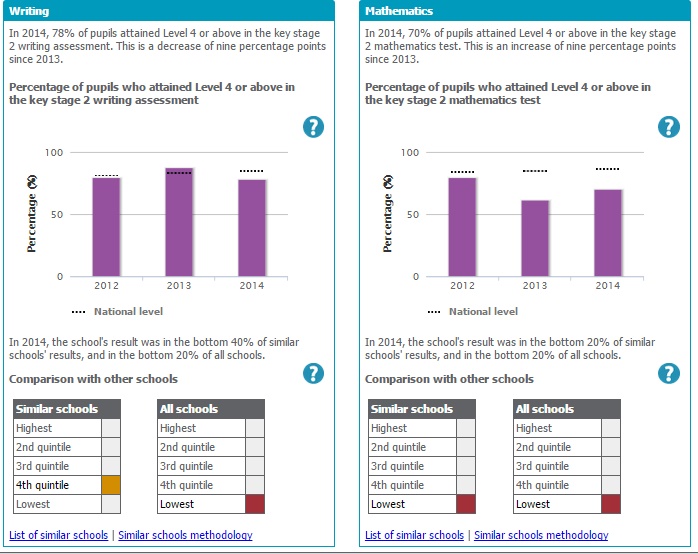

The other school I picked out of a hat to keep an eye on is Hilton Lane in Manchester. Here they are in 2014:

This year is the last in which we will have Reading and Maths reported using the current KS2 SATs assessment framework, so we will probably see another one of these Dashboards in 2016. I’d suggest that Ofsted thinks long and hard about how it replaces what is currently published.

As I’ve said previously, the main purpose of the dashboard is, officially at least, to assist Governors with the following ‘key questions’:

1 Is this the picture that you were expecting?

2 Are standards rising in reading, writing and mathematics at Key Stage 1 and Key Stage 2?

3 Are standards rising in English, mathematics and science at Key Stage 4?

4 How is your school performing compared with other schools with a similar intake of pupils?

5 Are there differences between groups of pupils?

6 How is the pupil premium funding being used and is it making a difference?

7 What is your school doing to make sure that all pupils make at least the progress that is expected?

8 Has attendance improved over the last three years?

And as I said last year, ‘Of these questions, the only one which the Data Dashboard could possibly help with is the final one about attendance (8). Schools might, at a push, be held to be solely accountable for this. All of the other questions are either almost entirely dependent on the individual children in given cohorts (2, 3, 5) or impossible to answer because of demonstrably false assumptions about what data can tell you (1, 4, 6, 7).’ I stand by that.

So what about the non-test score data in the Dashboard?

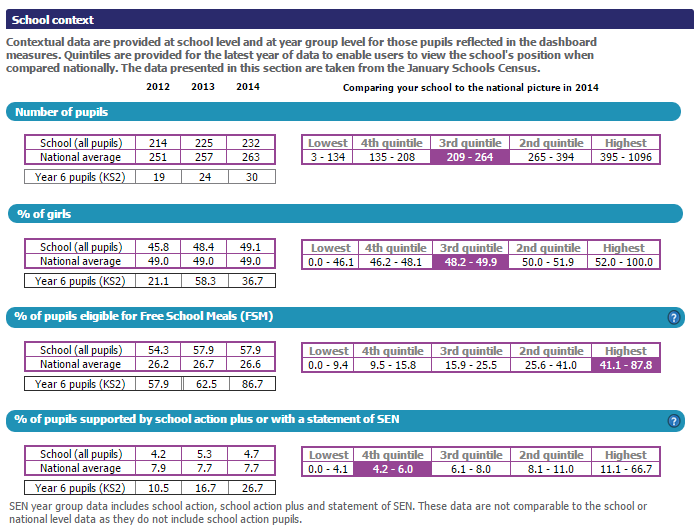

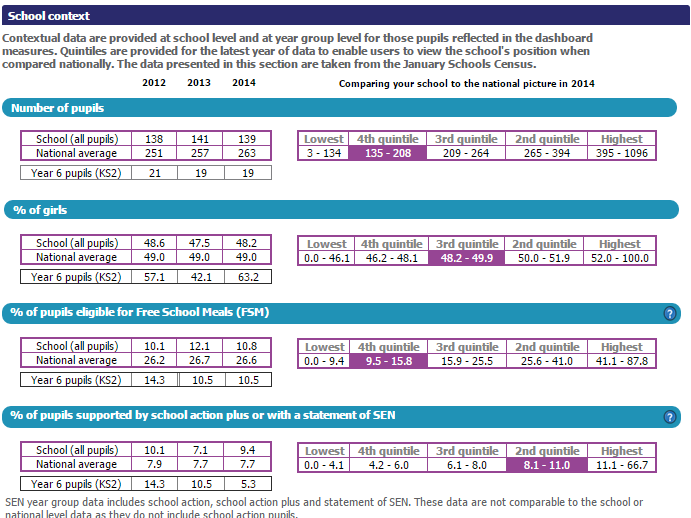

I didn’t look at the ‘School Context’ tab on the Dashboard last year, for reasons outlined above. I suggest that you do look closely at this example, from Hilton Lane:

The other data here – the numbers of children eligible for FSM or recorded on a SEND register - is simply ordinal data (an issue I spoke about at the Festival of Education). Why isn’t it interval or ratio data? I’d hope regular readers will have immediately noticed that there is no regular interval between each child eligible for FSM or recorded on a SEND register, because each pupil may have greater or lesser needs not captured in these headline figures. All numbers are not the same, after all. And that means that, whilst it is possible to calculate a mean, as has been done at a national level, that’s a pretty meaningless statistic since the FSM/SEND data is simply ordinal data. An ‘average’ of 26.6 FSM can’t really be compared to an ‘average’ of 26.7 FSM, for reasons which should be obvious.

What’s more, most primary schools have fewer than 100 children in a school year, so using percentages makes small differences look a lot bigger than they actually are. Saying that 63.2% of one class are girls compared to 36.7% of another is not as clear as saying that Class A had 12 girls and Class B had 11, and the same classes had 7 and 19 boys respectively.
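To make the point about small cohorts concrete, here is a minimal sketch in Python. The class sizes are an assumption on my part: 12 girls in a class of 19 and 11 girls in a class of 30 are the figures that reproduce the 63.2% and 36.7% quoted above. The helper name `share` is purely illustrative.

```python
def share(count: int, total: int) -> float:
    """Return `count` as a percentage of `total`, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Assumed class sizes, chosen because they reproduce the quoted percentages.
classes = {
    "Class A": {"girls": 12, "pupils": 19},
    "Class B": {"girls": 11, "pupils": 30},
}

for name, c in classes.items():
    pct_girls = share(c["girls"], c["pupils"])
    # How many percentage points a single child is worth in a class this size.
    one_pupil = share(1, c["pupils"])
    print(
        f"{name}: {c['girls']} girls out of {c['pupils']} pupils "
        f"= {pct_girls}% (one pupil = {one_pupil} points)"
    )
```

Under these assumed figures, a gap of more than 26 percentage points sits on top of a difference of a single girl, and one child joining or leaving either class moves the headline figure by several points.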

So how is the information about FSM and SEND helpful when placing the schools in context? Remember that Hilton Lane has seen a “dip in EGPS, a dive in Reading and Writing, and they are still bumping along the bottom in Maths.” This data might help explain why this might have happened. The school had 26 pupils who were classified as officially poor – those in receipt of Free School Meals (FSM) - in their Year 6 class in 2014 – whereas there were too few non-FSM children to be able to make meaningful comparisons between the FSM/Non-FSM groups in 2013, when 9 children out of a class of 24 were not FSM eligible. Now, whilst FSM is just one measure of disadvantage, and not a particularly good one at that, this tells you something, especially when you compare this to Aldborough Primary School, who had two Year 6 children in receipt of Free School Meals in 2014’s Year 6.

Looking at Special Educational Needs and Disability (SEND), Hilton Lane had 8 out of 30 children with learning difficulties in 2014 (compared to 4 out of 24 children in 2013). This compares to Aldborough’s Year 6 which had one child in 2014 and two children in 2013 who had additional needs. It is somewhat different when one in four children has additional needs compared to one in ten, even if those needs might be very different depending on the children involved, and this information is certainly useful when considering the challenges the school faces.

Unfortunately, much of the detail I’m picking out is likely to be drowned out by the headline figures, and presenting nominal data in percentages and with means is potentially misleading.

So where does that leave us?

With its reliance on test score data to summarise a school, the Data Dashboard was always going to give a misleading impression of a school, for reasons I’ve explained at length elsewhere. Showing just three years doesn’t enable those reading the dashboard to see just how randomly distributed school data is, and opens up the very real possibility that readers will make assumptions - both positive and negative – which don’t actually reflect the situation in any given school.

Of course, Ofsted will say that this is why they visit schools, as they need to look behind the numbers. This clearly hasn’t been true in the past, since schools like Aldborough Primary haven’t had a visit since 2009. Whilst the new inspection framework means that schools with ‘good’ data will be visited more frequently, halo effects and a limited time in school will mean that Ofsted Reports will continue to reflect the misleading assumptions inspectors are forced to make based on data.

It is a pity that useful data is presented in percentage form, and that the fuzziness of FSM and SEND data is not presented with an indication of its imprecision. The concerns I raised last year regarding the misleading interpretation of quintile data still stand.

Ultimately, data like this needs to be treated with huge caution. I hope that the cautions I have outlined here are useful when you next look at a sea of green or red.
