Much of the deeper thinking about education of late has been about how we gather, process and use evidence, and how we move towards being a more evidence-informed profession. The idea that we are all affected by our own biases is finally gaining traction, as is the idea that we should embrace uncertainties rather than assume that there is objective truth to be found within numbers in education. Because education is a hotly contested area of public life, however, we also see a lot of 'evidence' which is clearly directed by policy and prejudice, and it isn't unusual to see stuff which has pretty much been made up (and often cloaked with large degrees of obfuscation) to suit a particular agenda, or which ignores research which doesn't fit with a particular world view.

Roll up for the Education Data Pub Quiz!

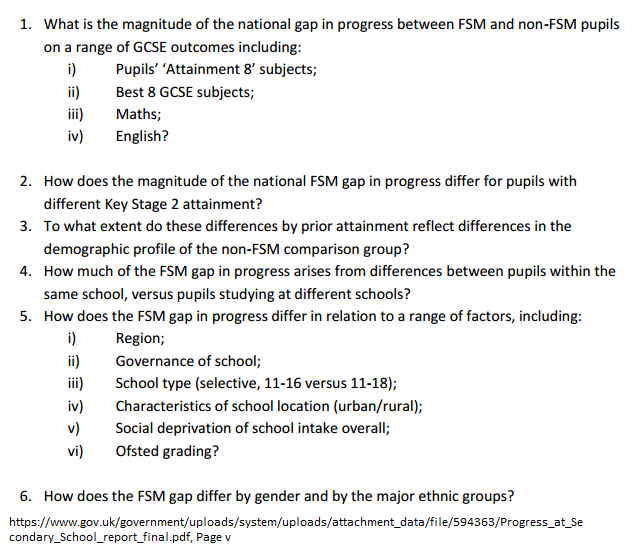

First up, a mixed bag: The Government’s Social Mobility Commission’s ‘Low income pupils’ progress at secondary school’ Report. If you are a Head Teacher, member of a school Senior Management Team, MAT CEO, researcher or politician pontificating about education, you really should have a good idea of the answers to the following questions:

1. i) Quite big

iii) Quite big

iv) Quite big

2. It’s all quite big, regardless of prior attainment.

3. The less deprived the intake, the higher the progress.

4. Within school is around 90%, between schools around 10%.

5. i) London is smaller, everywhere else is similarly large.

ii) Sponsored academies smaller, Converter academies bigger, Faith schools bigger.

iii) Pretty much the same.

iv) Urban smaller, rural bigger.

v) In very high & very low FSM, smaller gap.

vi) O smaller, RI/I bigger.

6. FSM Boys worse, girls better. Ethnic groups: Chinese good, White Brits worse. Others similar.

The report muddies the waters a bit as it mainly looks at progress, whereas lots of the questions posed are about FSM/Non-FSM gaps. This is probably because politicians are fixated on ‘gaps’, whereas those who know a bit about education like to make a distinction between progress (also known as ‘growth’) and achievement.

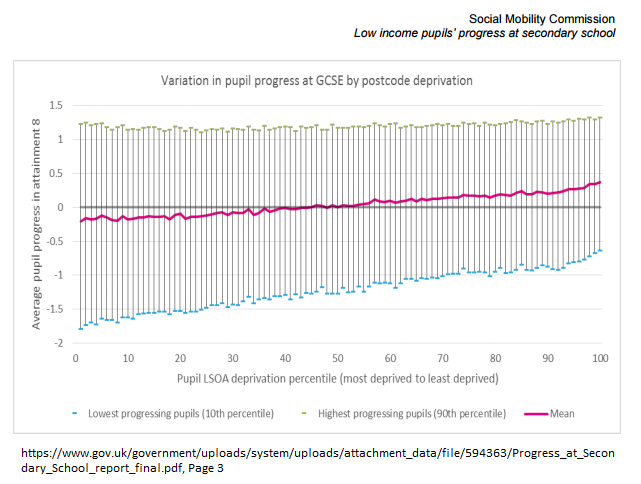

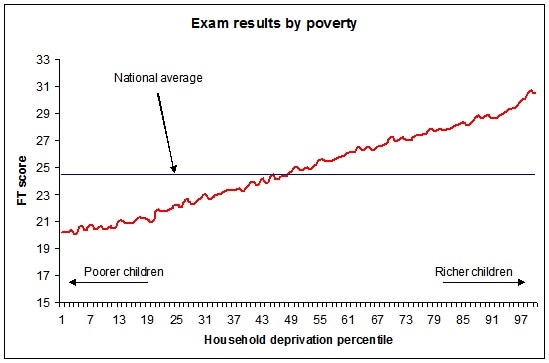

That said, those who claim that progress is somehow fundamentally different to attainment – I’m looking at you, Ofsted – should have a good look at this graph.

“Single tests may misrepresent pupils’ abilities because of a range of factors. (…) KS2 tests also have limited reliability. (There are) concerns that KS2 tests are not accurate measures of a pupil’s ability, and instead reflect the result of a period of intense preparation for those tests during Year 6.”

Nevertheless, mean progress and mean attainment decrease as deprivation increases. And whilst Progress 8 is clearly a better way of assessing a school’s impact than raw attainment, as this useful blog from Edudatalab points out, P8 isn’t a measure of a school’s overall quality.

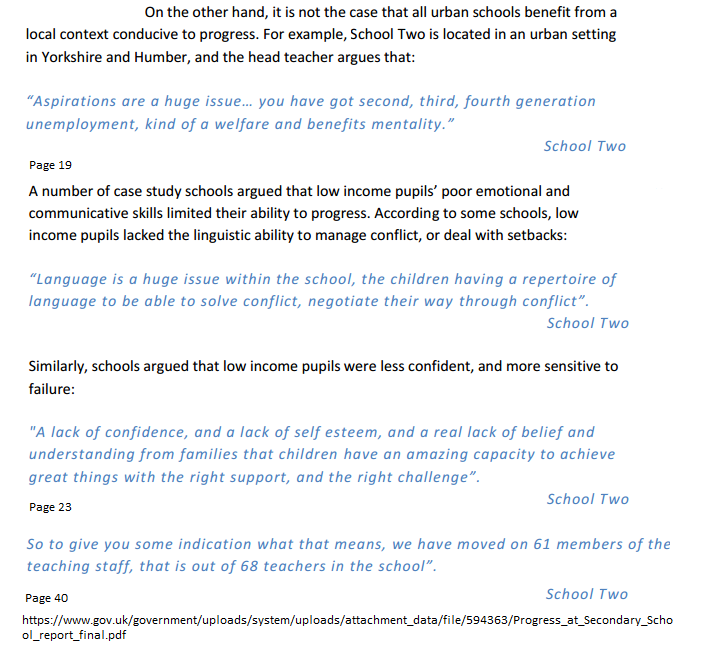

For those who worry about the ongoing recruitment and retention issues in education (i.e. everyone in education except the DfE), the following speaks volumes.

One of the really odd things is that, having established how pupils in receipt of FSM fare compared to their non-FSM contemporaries – essentially, on all measures, those who are poor struggle to compete with those who are not (unless you are poor and not White British, in which case things are slightly different) – the report then makes the following evidence-free turn:

“While the magnitude of the gap in progress at secondary school between low income pupils and their peers reveals a breakdown in the promise of education as a driver of social mobility, decisions and actions taken by schools can have a profound impact on outcomes.”

‘A breakdown in the promise of education as a driver of social mobility’ is a curious turn of phrase. It’s not new, of course, and there are many politicians who have asserted this kind of thing before. It’s a nice idea, naturally. But it isn’t based on any evidence; there’s certainly no evidence in the research data presented here that ‘education is a driver of social mobility’.

There’s also no evidence presented here that ‘actions taken by schools can have a profound impact on outcomes’.

The report asserts, without evidence, that the following are true:

· School culture is important

· Data is a valuable tool

· Being able to recruit the right teachers is a driver of success

· The teaching of pupils with SEND needs to be excellent

· Decisions about pupil grouping and resourcing have a profound impact

There’s plenty of anecdote, certainly. But before anyone gets suckered into thinking, “Yeah, Culture! Data! Right Teachers! (Not the 61 sacked teachers at School Two, obviously, they’re all the Wrong Teachers!) Teaching SEND excellently! Decisions!”, remember that this is not based on evidence of any kind whatsoever, certainly not in this report. Which is depressing. And whilst it all sounds like the right thing to do, there’s no reason to think that any of these things will magically ‘narrow the gap’, or ‘diminish the difference’ or deliver whatever snappy platitude politicians are using this week to discuss the challenges in education.

In summary, this report does some fairly standard gathering and analysis of data, and then takes a weird, evidence-free leap which isn't based on anything other than anecdote. To quote a current advert, "Gut feel, though, isn't it?"

Shout to the Top

Moving on, the Sutton Trust delivered its latest report, Selective Comprehensives 2017, on March 1st to coincide with national Secondary School offer day, and garnered a lot of press, appearing in The Independent, The Guardian, The Mirror and elsewhere.

Unfortunately, it is almost entirely unhelpful in its headline message, which, as summarised in a press release, is that ‘The top comprehensive schools for GCSE grades are significantly more socially selective than the average state school in England, according to new research published by the Sutton Trust today. The 500 non-selective state schools where pupils are most likely to get five good GCSEs take just over half the proportion of disadvantaged pupils taken by the average state school (9% v 17%) – and lead to a £45,700 house price premium.’

The problem with this report is that, whilst it presents some evidence for its conclusions, it does so almost entirely selectively, using some weird data (which is nearly all policy-based evidence), and ignores a huge amount of other evidence which it really should be taking into account before pronouncing on education and lobbying government to change policy and practice. It is a perfect example of policy-informed evidence, at best.

It’s not unusual, when reading pretty much anything from the Sutton Trust, for the overriding thought to be that it completely ignores nearly all of the well-established understanding of the drivers of academic achievement in schools. As has been known since the Coleman Report fifty years ago, academic results are driven by a combination of school effects, peer effects and pupil effects. Pupil effects dwarf the others, and peer effects dwarf school effects. Yes, schools matter, but they are a small part of a much, much bigger picture.

The research in the Social Mobility Commission’s report mentioned earlier is simply the latest in a huge body of evidence stretching back to Coleman which consistently finds that the variation in attainment within any school is always massively larger than the variation in attainment between schools. Some children within the same school do well and some don't, and this happens in every school. This really shouldn’t be news to anyone writing about education.
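For readers who want to see what "within school" and "between schools" variation means in practice, here is a minimal sketch of the usual sum-of-squares split. The scores are invented purely for illustration and come from neither report; the function name `variance_split` and the school labels are likewise hypothetical.

```python
from statistics import mean


def variance_split(scores_by_school):
    """Return (within_share, between_share) of the total variation in pupil scores."""
    all_scores = [s for scores in scores_by_school.values() for s in scores]
    grand_mean = mean(all_scores)

    # Between-school variation: each school's mean around the overall mean,
    # weighted by the number of pupils in that school.
    between = sum(
        len(scores) * (mean(scores) - grand_mean) ** 2
        for scores in scores_by_school.values()
    )

    # Within-school variation: each pupil around their own school's mean.
    within = sum(
        (s - mean(scores)) ** 2
        for scores in scores_by_school.values()
        for s in scores
    )

    total = between + within
    return within / total, between / total


# Hypothetical scores, made up for illustration only.
toy_schools = {
    "School A": [30, 45, 50, 55, 70],
    "School B": [35, 50, 55, 60, 75],
}

within_share, between_share = variance_split(toy_schools)
print(f"within schools: {within_share:.0%}, between schools: {between_share:.0%}")
```

Even though School B's average is higher in this toy example, almost all of the spread sits among pupils inside each school, which is the pattern the research literature keeps finding.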

What’s more, someone should really explain to the Trust that the academic results any school posts are the outputs of the children who attend the school. Change the children, you change the results. Outside London, secondary schools chock-full of well-off, well-supported, well-connected children end up by definition in the ‘high attaining schools’ (London is slightly different because… Well, you should know, really, if you are reading this. In a nutshell, those who work, live and go to school there are systematically different to those who live outside the capital). It’s a self-fulfilling prophecy – ‘this is the best school because it gets the best results because it has the most privileged children because it’s the best school’.

All this makes the opening paragraph of Peter Lampl’s foreword trivially true, but only if you accept the world view to which Peter Lampl and others like him subscribe:

Schools which educate children from chaotic homes in families struggling with day to day life, whose children opt for a non-degree-based career, doing a job which lots of ordinary people do, are by Lampl’s definitions not ‘successful’ or anywhere near ‘the best’.

The rest of the Sutton Trust report is hard to read, because the base prejudices are so prevalent and unquestioned. This really shouldn’t be a surprise, because as far as I know the Sutton Trust was set up in 1997 largely as a political campaign to bring back the Assisted Places Scheme, which had existed from 1980 until Tony Blair abolished it in… 1997. Since then, the Trust has broadened its horizons, and now has fingers in many pies. But the basic idea remains: If poor children could only attend the schools chock-full of rich children, they’d storm the barricades, just as Peter Lampl – the child, incidentally, of first generation immigrants, who’d passed the 11 plus in 1958 and gone to Oxford (i.e. not the poor disadvantaged underclass) – did.

Amazingly, the Assisted Places Scheme (or something like it) is back on the political agenda, along with selective education based on ability at 11 years of age, despite ample evidence that neither move would help many children who weren't from the affluent middle classes. That it is on the agenda is testament to campaigns run by the Sutton Trust and others, who have stuck doggedly to their prejudices for an extremely long time.

The report makes various recommendations which are entirely evidence-free:

1. More schools, particularly in urban areas, should take the opportunity where they are responsible for their own admissions to introduce random allocation (ballots) or banding to ensure that a wider mix of pupils has access to the most academically successful comprehensives.

The Trust has been banging this particular drum for 10 years or more, with very little success. There’s scant evidence that ballots make any difference to outcomes (remember that children get the results, not schools) and, if anything, they attract supported children rather than the disadvantaged.

2. Banding is most effective when a co-operative agreement can be reached between schools in an area.

3. Ballots can be used in conjunction with catchment areas to improve the diversity of intake.

Much of this is pure speculation, and combining catchment areas and ballots would simply give affluent parents more reason to select their child’s school via house price. Rather than being a sure-fire bet, ballots could be just as likely to have the effect that busing of under-privileged groups had in the USA following the Coleman Report, when affluent groups abandoned neighbourhoods as their schools began to educate children different to their own. The Sutton Trust doesn’t seem to know, or care, what might happen if ballots were introduced across the country.

4. Information availability and willingness to go the extra mile often has significant effects on access to better schools.

This is, once again, more evidence-free speculation.

5. It is particularly important that parents are aware not just of the school choices available, but of their rights to free transport.

There’s little evidence parents aren’t aware of this, but – yay – something which seems like a decent thing to do, even if it’s entirely unrelated to the actual research in the report.

6. Faith Schools need to look at their recruitment of disadvantaged pupils.

Which isn’t going to happen as a result of this woefully limp recommendation.

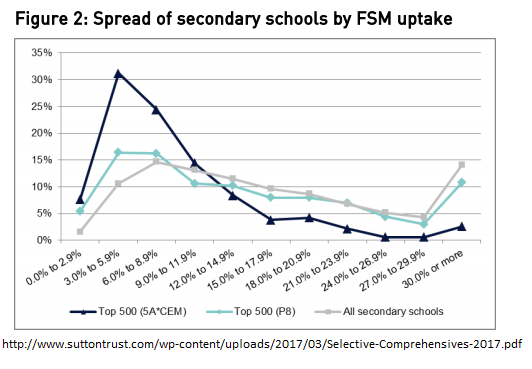

To be fair to the Trust, it has moved on a bit since its previous reports on ‘top schools’, in that this report considers progress as well as attainment. Lots of what the Trust calls ‘top’ secondary schools can’t show progress to match schools doing a good job with more middling intakes, mainly because of ceiling effects (their students can’t get more than the highest grade, often having started at the highest grades in Key Stage 2). The ‘top 500’ schools defined by attainment, unsurprisingly, have far fewer FSM-eligible children (9.4% rather than the national figure of 17.2% of pupils). When ‘top’ schools are defined by progress rather than attainment, the ‘top 500’ is much closer to the national average at 15.2%.

By the progress measure, children receiving FSM do get to access the Top 500 schools. Strangely, this doesn’t make it into the press release and, therefore, the news reports. Which is a pity, because the picture is actually pretty good when you look at the figures for Progress and FSM.

Of course, the Sutton Trust is right to say that great progress won’t get you into Oxbridge, and that elite professions tend to be dominated by the elite, but there are many clear reasons why those who are born poor struggle to compete with those born with silver spoons in their mouths. Those reasons don’t include, however, any evidence that their schools are doing a bad job, as demonstrated by the Trust’s analysis of the distribution of top schools for progress and prevalence of FSM children within those schools.

Unfortunately, the report visualises this using this doozy of a graph, which is verging on the statistically illiterate. This is not a time series, and the data isn’t continuous, so a line graph is ludicrous.

Another weird thing the Sutton Trust report does is to create a bizarre ‘catchment’ statistic, which it then uses to suggest that the top 500 schools for attainment and progress are somehow unrepresentative of their local area. This is really odd, and seems to be policy-informed evidence of the highest order. It's worth reiterating the simple truth that state comprehensive schools don’t have catchment areas. Admission is based almost exclusively on straight-line distance from the school gate to the pupil’s front gate, and has been for some time.

What the report has done is to create a statistic which counts any Lower Layer Super Output Area (a government-defined geographical area which includes roughly 1,500 residents) if five children have been offered a school place at a given school within the last three years. This is just weird, because it could be affected by all manner of quirks which the statistic can’t account for. We just don’t know.
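To make the rule concrete, here is a rough sketch of how such a ‘catchment’ could be computed. It is my reading of the description, not the report's actual method: I am assuming that "five children" means at least five offers in total across the three-year window, and the function name `catchment_lsoas`, the data layout, the area codes and the school name are all hypothetical.

```python
from collections import Counter


def catchment_lsoas(offers, school, latest_year, min_pupils=5, window_years=3):
    """Return the set of areas counted as part of `school`'s 'catchment'.

    `offers` is an iterable of (lsoa_code, school_name, year) tuples,
    one tuple per pupil offered a place. Assumed layout, for illustration.
    """
    first_year = latest_year - window_years + 1
    counts = Counter(
        lsoa
        for lsoa, offered_school, year in offers
        if offered_school == school and first_year <= year <= latest_year
    )
    # An area is in the 'catchment' only if it reaches the pupil threshold.
    return {lsoa for lsoa, n in counts.items() if n >= min_pupils}


# Hypothetical offers; the area codes and the school name are made up.
offers = (
    [("LSOA-1", "Hilltop", 2016)] * 3
    + [("LSOA-1", "Hilltop", 2017)] * 2   # five in total: counted
    + [("LSOA-2", "Hilltop", 2017)] * 4   # four in total: not counted
    + [("LSOA-3", "Hilltop", 2013)] * 6   # six, but outside the window
)

hilltop_catchment = catchment_lsoas(offers, "Hilltop", latest_year=2017)
print(hilltop_catchment)
```

In this toy version, one pupil more or fewer moves an entire neighbourhood of roughly 1,500 people in or out of the ‘catchment’, which gives a flavour of how sensitive a threshold statistic like this could be.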

What we do know is that this gerrymandered stat – the epitome of policy-informed evidence – is used in the report to claim that ‘top schools’ are somehow unrepresentative of their local areas, even though this is pretty much meaningless. State comprehensives are, whether we like it or not, entirely made up of the children in their local area. If you don't live close enough, you can't get in. Nothing else can stop children like you getting in.

To suggest this somehow isn't the case suits the Sutton Trust’s agenda, however, which is to push the government to force schools to amend their admissions criteria and to introduce ballots. And as noted above, the main issue with this is that the report doesn’t explore how privileged parents might react to this moving of the goal posts, which might make the situation even worse for underprivileged children.

As regular readers will know, I’ve argued before that selection by house price is – contrary to received opinion – a good thing. Parents should not feel guilty for choosing to live in the most expensive neighbourhood they can, with the intention of gaining a place at a school they like. It is actually necessary that parents do exactly this, as it fulfils the dual function of giving parents some choice over their children’s school as well as putting pressure on schools to provide an education which parents want. If you have moved house to get your children into a particular school, are considering doing so, or would consider doing so in the future, you are acting exactly as you should, for the good of the system as a whole. Far from being morally wrong, it's essential that parents act in this way.

This report echoes the sentiments of Peter Hitchens and Theresa May, blurring the nature of selection in pursuit of its own agenda. It has led to various news reports which push the same dubious claim, that selection by ability or random allocation is somehow better than selection by house price. It really isn't. It is, to use the current controversial phrase, verging on ‘fake news’, and is simply wrong.

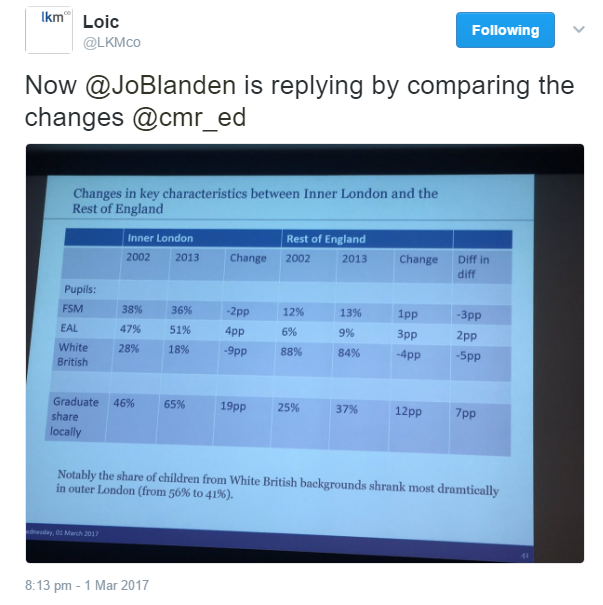

As an interlude to the flurry of reports published this week, Wednesday 1st March saw a fascinating debate at the Centre for the Study of Market Reform of Education (Oh, I know...), on the so-called 'London Effect'. I’ve written about this before, and in summary, I’m with those of the view that London is completely unlike the rest of England, that the main effect is due to changing demographics and that a lot of nonsense is claimed about the factors which have changed education outcomes in Inner London.

Rather than trying to storm the barricades a la the Sutton Trust, pontificating from misguided first principles, Teach First works in the schools which actually serve the disadvantaged in society, and works to find ways to support poorer pupils in their education and beyond. Whilst there are obvious criticisms to be made of Teach First, those who are part of the organisation have a great deal of first-hand experience of the issues disadvantaged children face, unlike so many others commenting from afar.

The executive summary is a clear statement of intent.

Teach First’s recommendations are based on the evidence which they have collected on barriers which the disadvantaged actually face: They need good careers information, assistance in accessing Higher Education, and opportunities beyond school in order to break what the charity terms the ‘class ceiling’. More power to their elbow, I say.

The final publication which caught my eye this week was from the much-maligned Ofsted. And the good news is that there is clear, tremendously useful, evidence-informed advice and guidance for inspectors in the March 2017 School Inspection Update.

“As inspectors, we can help schools by not asking them during inspections to provide predictions for cohorts about to take tests and examinations. It is impossible to do so with any accuracy until after the tests and examinations have been taken, so we should not put schools under any pressure to do so – it’s meaningless. Much better to ask schools how they have assessed whether pupils are making the kind of progress they should in their studies and if not, what their teachers have been doing to support them to better achievement.”

“Finally, I know that schools have been appreciative of our move over two years ago to stop grading the quality of teaching in individual lessons. The evidence supporting the overall judgement for quality of teaching, learning and assessment has not been diminished by this move and it has helped support those schools that wished to stop grading individual lessons themselves. However, when observing lessons and feeding back to teachers afterwards, we must not give the incorrect impression that any graded judgement has been formed. In line with our training, we should only use our observations during individual lessons to establish strengths and areas for improvement that, in discussion with others on the inspection team, help to identify and synthesise the common strengths and areas for improvement across the school. In this way, the judgement of the overall quality of teaching, learning and assessment is agreed.”

For those of us who have lobbied Ofsted to ensure that inspectors are more data-literate, this is yet more proof that the Inspectorate are happy to take on board criticism and are acting quickly when people on the ground ask for their support. It's also good to see policy and practice based on evidence, rather than prejudice.
